How do you write a job description for a web scraping project that achieves your goal with minimal lines of code?

What is web scraping?

Web scraping, web harvesting, or web data extraction is data scraping used for extracting data from websites. Web scraping software may access the World Wide Web directly using the Hypertext Transfer Protocol, or through a web browser. While web scraping can be done manually by a software user, the term typically refers to automated processes implemented using a bot or web crawler. It is a form of copying, in which specific data is gathered and copied from the web, typically into a central local database or spreadsheet, for later retrieval or analysis.

Scraping a web page involves fetching it and extracting data from it. Fetching is the downloading of a page (which a browser does when you view the page). Therefore, web crawling is a main component of web scraping, used to fetch pages for later processing. Once a page is fetched, extraction can take place: its content may be parsed, searched, or reformatted, its data copied into a spreadsheet, and so on. Web scrapers typically take something out of a page to use it for another purpose somewhere else. An example would be finding and copying names and phone numbers, or companies and their URLs, into a list (contact scraping).
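To make the fetch-then-extract split concrete, here is a minimal sketch in Python using only the standard library. It assumes a static HTML page (no JavaScript rendering) and illustrates the contact-scraping example of copying companies and their URLs into a spreadsheet-friendly CSV. The function names (`fetch_page`, `extract_links`, `to_csv`) and the User-Agent string are hypothetical, not part of any particular tool.

```python
import csv
import io
from html.parser import HTMLParser
from typing import List, Optional, Tuple
from urllib.request import Request, urlopen


def fetch_page(url: str, timeout: float = 10.0) -> str:
    """Fetching: download one page's HTML (what a browser does when you view it)."""
    # Placeholder User-Agent string (an assumption, not a required value).
    req = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(req, timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


class LinkExtractor(HTMLParser):
    """Extraction: collect (link text, href) pairs from <a> elements."""

    def __init__(self) -> None:
        super().__init__()
        self.links: List[Tuple[str, str]] = []
        self._href: Optional[str] = None
        self._text: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")  # None if the <a> has no href
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse runs of whitespace and newlines inside the link text.
            text = " ".join("".join(self._text).split())
            self.links.append((text, self._href))
            self._href = None


def extract_links(html: str) -> List[Tuple[str, str]]:
    parser = LinkExtractor()
    parser.feed(html)
    parser.close()
    return parser.links


def to_csv(rows: List[Tuple[str, str]]) -> str:
    """Copy extracted rows into CSV text that a spreadsheet can open."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["name", "url"])
    writer.writerows(rows)
    return buf.getvalue()
```

For example, `to_csv(extract_links(fetch_page("https://example.com")))` would return CSV text ready to save or open in a spreadsheet. Keeping fetching and extraction in separate functions lets you test the extraction logic on saved HTML without touching the network.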

What can you do with data scraping?

Web scraping is used for content scraping, and as a component of applications used for web indexing, web mining and data mining, online price change monitoring and price comparison, product review scraping (to watch the competition), gathering real estate listings, weather data monitoring, website change detection, research, tracking online presence and reputation, web mashups, and web data integration.

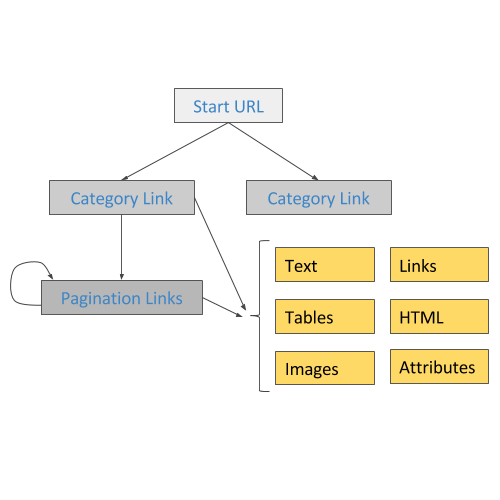

Using data scraping tools, you can build sitemaps that tell the scraper how to navigate the site and which data to extract. By combining different selector types, you can extract multiple kinds of data: text, tables, images, links, and more.
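The idea of selector types can be sketched with a small, hypothetical "sitemap": a dictionary mapping each output field to a tag name and, optionally, the attribute to read. This sketch uses Python's built-in `html.parser` and does not reproduce any specific tool's sitemap format. A selector with no attribute captures the element's text, while `("a", "href")` and `("img", "src")` capture links and images; table cells are read as text selectors on `td`.

```python
from html.parser import HTMLParser
from typing import Dict, List, Optional, Tuple

# A selector is (tag name, attribute to read); attribute None means "read the text".
Selector = Tuple[str, Optional[str]]


class SelectorScraper(HTMLParser):
    """Apply a hypothetical sitemap of tag/attribute selectors in one pass."""

    def __init__(self, sitemap: Dict[str, Selector]) -> None:
        super().__init__()
        self.sitemap = sitemap
        self.results: Dict[str, List[str]] = {field: [] for field in sitemap}
        self._capturing: Optional[Tuple[str, str]] = None  # (field, tag) being read
        self._buf: List[str] = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        for field, (sel_tag, attr) in self.sitemap.items():
            if tag != sel_tag:
                continue
            if attr is None:
                # Text selector: buffer text until this tag closes.
                self._capturing, self._buf = (field, tag), []
            elif attr_map.get(attr):
                # Attribute selector, e.g. link hrefs or image srcs.
                self.results[field].append(attr_map[attr])

    def handle_data(self, data):
        if self._capturing is not None:
            self._buf.append(data)

    def handle_endtag(self, tag):
        if self._capturing is not None and tag == self._capturing[1]:
            field = self._capturing[0]
            self.results[field].append(" ".join("".join(self._buf).split()))
            self._capturing = None


def scrape(html: str, sitemap: Dict[str, Selector]) -> Dict[str, List[str]]:
    scraper = SelectorScraper(sitemap)
    scraper.feed(html)
    scraper.close()
    return scraper.results


# Example sitemap: one field per selector type.
SITEMAP: Dict[str, Selector] = {
    "title": ("h1", None),     # text
    "links": ("a", "href"),    # links
    "images": ("img", "src"),  # images
    "cells": ("td", None),     # table cells, read as text
}
```

This simplified version matches by tag name only and captures one text element at a time, so nested text selectors are not supported; dedicated scraping tools typically offer richer selectors such as CSS selectors.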

What role should a scraper play for you?

Web scraping is the process of automatically mining data or collecting information from the World Wide Web. It is an actively developing field that shares a common goal with the semantic web vision, an ambitious initiative that still requires breakthroughs in text processing, semantic understanding, artificial intelligence, and human-computer interaction. Current web scraping solutions range from ad-hoc approaches that require human effort to fully automated systems that can convert entire websites into structured information, albeit with limitations.

Common techniques for scraping data include:

- Human copy-and-paste: Sometimes even the best web scraping technology cannot replace a human’s manual examination and copy-and-paste, and this may be the only workable solution when the target websites explicitly set up barriers to prevent machine automation.

- Text pattern matching: A simple yet powerful approach to extracting information from web pages can be based on the UNIX grep command or the regular-expression matching facilities of programming languages (for example, Perl or Python).

- HTTP programming: Static and dynamic web pages can be retrieved by sending HTTP requests to the remote web server using socket programming.

- HTML parsing: Many websites have large collections of pages generated dynamically from an underlying structured source such as a database. Data of the same category are typically encoded into similar pages by a common script or template. In data mining, a program that detects such templates in a particular information source, extracts its content, and translates it into a relational form is called a wrapper. Wrapper generation algorithms assume that the input pages of a wrapper induction system conform to a common template and can be easily identified by a common URL scheme. Moreover, some semi-structured data query languages, such as XQuery and HTQL, can be used to parse HTML pages and to retrieve and transform page content.

- DOM parsing: By embedding a full-fledged web browser, such as the Internet Explorer or Mozilla browser control, programs can retrieve the dynamic content generated by client-side scripts. These browser controls also parse web pages into a DOM tree, from which programs can retrieve parts of the pages.

- Vertical aggregation: Several companies have developed vertical-specific harvesting platforms. These platforms create and monitor a multitude of “bots” for specific verticals with no “man in the loop” (no direct human involvement) and no work related to a specific target site. The preparation involves establishing the knowledge base for the entire vertical, after which the platform creates the bots automatically. The platform's robustness is measured by the quality of the information it retrieves (usually the number of fields) and its scalability (how quickly it can scale up to hundreds or thousands of sites). This scalability is mostly used to target the long tail of sites that common aggregators find too complicated or labor-intensive to harvest content from.

- Semantic annotation recognition: The pages being scraped may embed metadata or semantic markup and annotations, which can be used to locate specific data snippets. If the annotations are embedded in the pages, as with microformats, this technique can be viewed as a special case of DOM parsing. Alternatively, the annotations, organized into a semantic layer, are stored and managed separately from the web pages, so scrapers can retrieve the data schema and instructions from this layer before scraping the pages.

- Computer vision web-page analysis: Some efforts use machine learning and computer vision to identify and extract information from web pages by interpreting them visually, as a human would.
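As a rough illustration of text pattern matching, the sketch below applies grep-style regular expressions directly to raw page text with Python's `re` module. The patterns are assumptions chosen for the example (simple email addresses and dollar prices) and will not cover every real-world format.

```python
import re
from typing import List

# Illustrative patterns only; real-world formats vary widely.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
# "$", an optional space, then digits with optional thousands commas and cents.
PRICE_RE = re.compile(r"\$\s?(\d+(?:,\d{3})*(?:\.\d{2})?)")


def grep_emails(text: str) -> List[str]:
    """Return every substring that looks like an email address."""
    return EMAIL_RE.findall(text)


def grep_prices(text: str) -> List[float]:
    """Return dollar amounts as floats, ignoring the surrounding markup."""
    return [float(amount.replace(",", "")) for amount in PRICE_RE.findall(text)]
```

Pattern matching is quick to write but brittle, because it ignores the page's HTML structure; for template-generated pages, an HTML or DOM parser is usually more robust.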
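For HTTP programming at the socket level, a hypothetical sketch can compose the request by hand and split the raw response into status code, headers, and body. It assumes a plain-HTTP server on port 80: it does no TLS, follows no redirects, and requests HTTP/1.0 so the server will not use chunked transfer encoding. The function names and User-Agent string are illustrative.

```python
import socket
from typing import Dict, Tuple


def build_get_request(host: str, path: str = "/") -> bytes:
    """Compose a minimal GET request by hand.

    HTTP/1.0 is used so the server replies without chunked transfer
    encoding and closes the connection when the body is complete.
    """
    lines = [
        f"GET {path} HTTP/1.0",
        f"Host: {host}",  # still needed for name-based virtual hosting
        "User-Agent: example-scraper/0.1",  # placeholder identifier
        "",
        "",
    ]
    return "\r\n".join(lines).encode("ascii")


def parse_response(raw: bytes) -> Tuple[int, Dict[str, str], bytes]:
    """Split a raw response into status code, headers (lower-cased names) and body."""
    head, _, body = raw.partition(b"\r\n\r\n")
    status_line, *header_lines = head.decode("iso-8859-1").split("\r\n")
    status = int(status_line.split()[1])
    headers: Dict[str, str] = {}
    for line in header_lines:
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    return status, headers, body


def http_get(host: str, path: str = "/", port: int = 80) -> Tuple[int, Dict[str, str], bytes]:
    """Fetch over a plain TCP socket: no TLS, no redirects."""
    with socket.create_connection((host, port), timeout=10) as sock:
        sock.sendall(build_get_request(host, path))
        chunks = []
        while True:
            chunk = sock.recv(4096)
            if not chunk:  # server closed the connection: response complete
                break
            chunks.append(chunk)
    return parse_response(b"".join(chunks))
```

Calling `status, headers, body = http_get("example.com")` would fetch that site's home page over plain HTTP. In practice a library such as `urllib.request` handles TLS, redirects, and encodings for you; the socket version mainly shows what travels over the wire.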

Key Features of Web Scraping

To remain competitive, businesses must be able to act quickly and confidently in their markets. Web scraping can play a significant role in the growth of the organizations that use these services.

The benefits of these services are:

- Low Cost: A web scraping service can save many hours of manual work, and the money that goes with them, because automated extraction replaces most manual copy-and-paste.

- Less Time: Besides lowering costs, a scraping solution reduces the time spent on data extraction tasks and delivers results quickly.

- Accurate Results: Web scraping solutions can produce accurate results faster, and at a larger scale, than manual collection. Typical outputs include product pricing data, sales leads, copies of online databases, real estate data, financial data, job postings, auction information, and more.

- Time-to-Market Advantage: Fast and accurate results help businesses save time, money, and labor, and gain a time-to-market advantage over competitors.

- High Quality: A web scraping solution can provide clean, structured, high-quality data through scraping APIs so that fresh data can be integrated into your systems.

Finding and hiring an expert scraper/crawler

It’s important to note that not every scraping expert will be an ideal fit for every project. For example, those with highly analytical backgrounds in software engineering may be ideal for developing algorithms but not the right fit for a data scraping project. That’s why it’s so important to understand what type of scraping expert will bring the most benefit to your company and business goals.

Here are some questions to consider:

What overall goal do you hope to achieve?

Including your goal in the project description helps professionals better understand what type of work is required.

What core skills will scraping experts need to complete the project?

The answer will revolve around your current data infrastructure and the processes used to extract information.

Would you benefit from someone with highly specialized skills in a few areas of data scraping, or would a well-rounded expert serve you better?

Are there any time constraints to consider with this project?

Let professionals know how many hours of work might be involved.

What kind of budget will this project have?

The more experience and expertise a data scraper has, the more they expect to be paid. A higher budget makes it more likely that top-tier experts will submit a proposal.

Web scraping project template

Below is a sample of how a project description may look. Keep in mind that many people use the term “job description,” but a full job description is only needed for employees. When engaging a freelancer as an independent contractor, you typically just need a statement of work, job post, or any other document that describes the work to be done.

<Job/Project Title>

ABC Company is looking for a web scraping expert to help us study our website traffic patterns and find areas for improvement. This project is estimated to require approximately 20-25 hours per week for the next few months to achieve the following goals:

- Reporting findings in a weekly summary

- Split testing underperforming pages and recording results

- Discovering which pages currently perform best

- Organizing site data into spreadsheets

The following skills are required:

- Excellent technical abilities

- Knowledge of quantitative split testing

- Experience with WordPress and Google Analytics

- A thorough understanding of MySQL databases

- Expertise or extensive experience with Python

The ideal freelancer will be a creative problem solver with an excellent work history on Toogit. To submit a proposal, please send a short summary of similar projects you’ve completed and why we should consider you for this project.

Hiring the right Web Scraping talent

Remember that technical ability is only one part of what makes an excellent web scraper. Great web scrapers are inquisitive: they want to make sure they are seeking the right kinds of answers, and they take an interest in your business to understand it better. The ideal professional will also be able to advise you on additional metrics to analyze and compare to help you meet your goals.

Also, keep in mind that communication is always a key consideration in the data science field. A brief interview can allow you to gauge how strong each professional is in expressing ideas and explaining their process. The more you speak to each professional by phone, email, or chat, the better you’ll be able to gauge their professionalism and communication skills and determine whether they’re right for your project.